Is DOOM doomed? Should we say our last words for Word? Will Mozilla be a dinosaur?

These questions echoed through the Montpelier Room of the Library of Congress earlier this week during Preserving.exe, a 20-21 May conference on the challenges of keeping software alive over the long term. The National Digital Information Infrastructure and Preservation Program (NDIIPP) invited Still Water’s John Bell and Jon Ippolito to represent the University of Maine’s Digital Curation program at the gathering, which also included conservators, scholars, librarians, astrophysicists, and industry reps from Microsoft to Mozilla.

Seeing the allure of buzzy CRTs and knobby consoles, some librarians wondered whether they should be collecting hardware as well as CDs. Al Kossow of the Computer History Museum immediately offered a contrarian perspective: “Believe me, you don’t want to be a computer history museum.” Even contemporary storage media break regularly: 20% of the DVDs Paul Klamer rips for the Library of Congress (a loophole lets him do this legally) fail when first acquired.

Given the difficulty of keeping obsolete tape heads and floppy drives running, emulation emerged as the clear frontrunner among the preservation strategies discussed at the summit. Ippolito cited the generational study conducted during the 2004 exhibition Seeing Double, which paired artworks running on their original hardware with their emulated doppelgangers. A visitor survey suggested that younger viewers are more tolerant of changes in hardware look and feel, as long as the behavior or gameplay is emulated accurately.

Attendees demoed some exciting new emulation services. Dan Ryan of CMU’s Olive project fought off demons in DOOM, played not in an emulator on his laptop but streamed live from a server where the game ran under emulation in real time. Jason Scott of the Internet Archive showed MESS, a cross-platform emulator now being ported to JavaScript thanks to Mozilla’s Emscripten compiler. Doug Reside of the New York Public Library showed a Unity player that let Web visitors manipulate a 3D view of the lighting for a particular performance. Tools like Unity, Olive, and JS MESS offer an easy way for anyone to run old software directly in a browser, without having to configure an emulator or download a ROM to their own machine.

Emulation may have been Preserving.exe’s odds-on favorite as the preservation strategy of the future, but MIT’s Nick Montfort and Michael Mansfield of the Smithsonian’s American Art Museum warned that endearing or telling qualities of vintage hardware can be lost when old computers are simulated in software.

Mansfield described complex artworks by Jenny Holzer and by Nam June Paik, whose remarkable retrospective is now on view at the American Art Museum. Montfort cited the idiosyncratic keyboard and fuzzy monitors of computers like the Commodore 64, scrutinized in the book 10 PRINT, collaboratively authored by Montfort, Bell, and eight others.

Otto de Voogd and Robert Kaiser of Mozilla described Firefox’s policy of archiving the comments for every code change; as an open-source organization, Mozilla has no trade secrets standing in the way of digital preservation. De Voogd invited others to download Mozilla’s code, asking only: “please don’t make people come to a reading room to see it.” Fortunately, Ben Balter of GitHub was on hand to offer a tried-and-true repository for open code of all kinds, prompting one attendee to coin a new standard for open data called TTG (“Time To GitHub”).

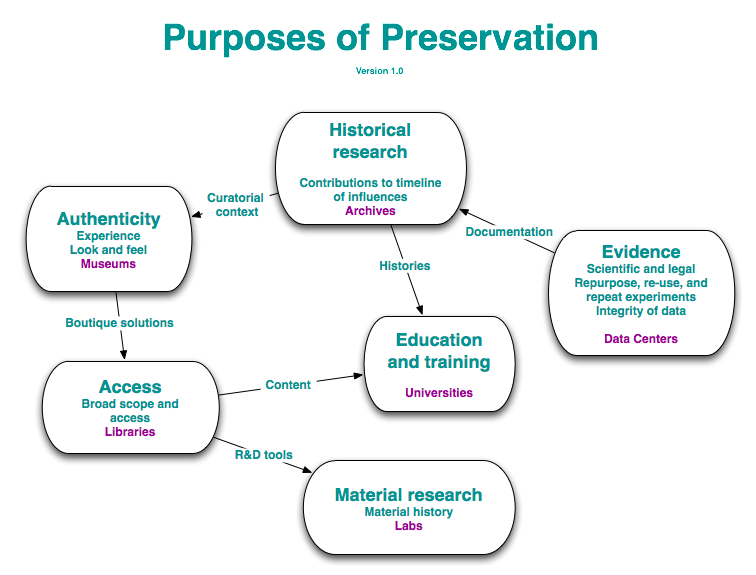

NDIIPP’s Bill LeFurgy and the Smithsonian’s Isabel Meyer contributed to a discussion on the varying aims of preservation, as represented in this diagram. The analysis covered how the goals of different organizations diverge (museums want authenticity, libraries want access) and converge (a digitizing technique developed by a museum conservator can make its way into a librarian’s toolkit).

Still Water’s John Bell described the Digital Curation graduate program, which trains fledgling preservationists in tools that help preserve hybrid media. Along with the Variable Media Questionnaire, Ippolito mentioned related innovations such as the variable media endowment and code escrow, as well as Re-Collection: Art, New Media and Social Memory, the 2014 MIT Press book on digital preservation that he is co-authoring with Richard Rinehart.

ABOVE FROM TOP: The Library of Congress, photo by Peter Teuben; custom component of Robert Watts’ Cloud Music, from the Smithsonian American Art Museum; summit participants including Isabel Meyer, Megan Winget, and John Bell.

Another interesting idea proposed at the meeting was a national registry for code, similar to those established for film (http://www.loc.gov/film/filmnfr.html) and recorded sound (http://www.loc.gov/rr/record/nrpb/registry/index.html).