In a world where a search box is usually the only way to enter an online archive, John Bell builds wrecking balls that tear down the walls between institutional silos. His latest project, a collaboration with Dartmouth and UMaine’s VEMI lab, has won a National Endowment for the Humanities grant to help scholars access and annotate historical film and television from archives across the globe.

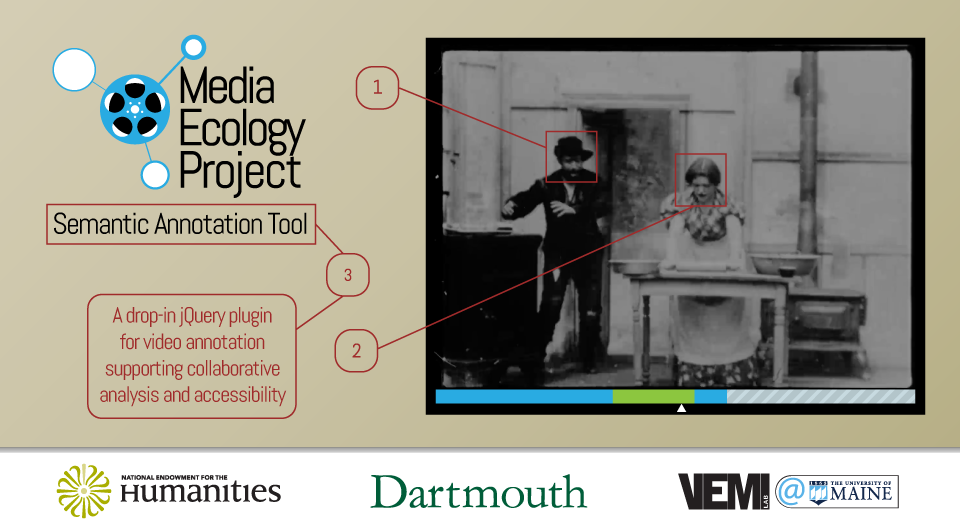

The two-year grant will support a collaboration among Bell, Dartmouth professor Mark Williams, and University of Maine’s Virtual Environmental and Multimodal Interaction (VEMI) Lab to build a Semantic Annotation Tool. SAT will be part of a suite of open-source research applications developed by Williams’s Media Ecology Project to enable creating, annotating, saving, and sharing clips of time-based media, whether a few seconds of footage or an entire film.

A Still Water Senior Researcher and architect of Dartmouth’s Academic Commons, Bell is an expert on the technology underpinning today’s online archives. As a member of the Digital Curation faculty, he also teaches the University of Maine’s online course in metadata, which begins this January 19.

The SAT video annotation system Bell has designed has two parts: a drop-in jQuery plugin for marking up videos on the Web, and a server that aggregates and distributes annotations of online videos. Intended uses include collaborative close reading of video for humanities research, simplified coding of time-based documentation in social science studies, and better accessibility of website media clips for people with impaired vision.
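
In SAT's terms, a clip is a stretch of time inside a video that stays hosted by its archive. The article does not say how SAT encodes those time ranges; one standard option is the W3C Media Fragments syntax, which appends `#t=start,end` (in seconds) to a video's URL. The sketch below assumes that syntax, and the helper names `clip_uri` and `parse_clip` are hypothetical, not part of SAT's plugin.

```python
from __future__ import annotations

from urllib.parse import urldefrag


def _fmt(seconds: float) -> str:
    # Millisecond precision, trailing zeros trimmed: 12 -> "12", 47.5 -> "47.5"
    return f"{seconds:.3f}".rstrip("0").rstrip(".")


def clip_uri(video_url: str, start: float, end: float) -> str:
    """Hypothetical helper: address seconds [start, end) of a video using the
    W3C Media Fragments temporal form '#t=start,end' (an assumption about SAT)."""
    if not 0 <= start < end:
        raise ValueError("expected 0 <= start < end")
    base, _ = urldefrag(video_url)  # drop any fragment already on the URL
    return f"{base}#t={_fmt(start)},{_fmt(end)}"


def parse_clip(uri: str) -> tuple[str, float, float]:
    """Hypothetical inverse of clip_uri. Only the plain-seconds 't=start,end'
    form is handled; open-ended or clock-time forms raise ValueError."""
    base, fragment = urldefrag(uri)
    if not fragment.startswith("t="):
        raise ValueError("no temporal fragment")
    start_s, _, end_s = fragment[2:].partition(",")
    if not end_s:
        raise ValueError("expected 't=start,end'")
    return base, float(start_s), float(end_s)
```

For example, `clip_uri("https://archive.example.org/films/reel1.mp4", 12, 47.5)` yields `https://archive.example.org/films/reel1.mp4#t=12,47.5`. The video itself is untouched; only the address of the excerpt changes.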

Consistent with the Still Water motto “Distribute and Connect,” SAT accumulates various users’ annotations on its own servers, all the while leaving the films in their original archives. Once SAT users download a plugin for their media players, they can see clips and notes created by other SAT users.
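
The "Distribute and Connect" arrangement can be sketched as a store that holds only annotations and refers to each film by its archive URL. This is a hypothetical in-memory model: the `Annotation` and `AnnotationStore` names and fields are assumptions, not SAT's actual server or schema. It shows how a query for a time range on one film returns notes left by every user, while the film never leaves its archive.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    # Hypothetical schema: the film is referenced by URL, never copied.
    media_url: str
    start: float  # seconds from the beginning of the media
    end: float
    author: str
    body: str


class AnnotationStore:
    """Hypothetical stand-in for an annotation server: annotations from all
    users are grouped by media URL; the media stays in its original archive."""

    def __init__(self) -> None:
        self._by_media: dict[str, list[Annotation]] = defaultdict(list)

    def add(self, ann: Annotation) -> None:
        if not 0 <= ann.start < ann.end:
            raise ValueError("expected 0 <= start < end")
        self._by_media[ann.media_url].append(ann)

    def in_range(self, media_url: str, t0: float, t1: float) -> list[Annotation]:
        """Annotations by any user whose interval overlaps [t0, t1),
        ordered by start time."""
        hits = [a for a in self._by_media.get(media_url, [])
                if a.start < t1 and a.end > t0]
        return sorted(hits, key=lambda a: (a.start, a.end))
```

A viewer scrubbing to seconds 18 to 30 of a film would see both another user's "Title card" note (10 to 20 s) and their own "Tracking shot" note (15 to 40 s), but not a note on a later scene.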

Archives that have expressed interest in this unique system include the Library of Congress’s paper print collection, UCLA’s Film and Television Archive, the Films Division of India, WGBH, and other archives in South Carolina, Georgia, Italy, Sweden, Brazil, and the Netherlands.

The University of Maine’s VEMI lab, which builds adaptive technologies and virtual environments for people with limited sight, hopes to combine SAT with machine learning to create descriptions of scenes for the visually impaired.

“A machine-vision program could scan a video, grab all of the places where it sees a particular face, or more generic objects in the scene, and then have an annotation that says this person is on camera right now, or there’s an airplane in the background,” says Bell. “The annotation tool itself is a foundational layer that will let us get to this.”
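
The pipeline Bell outlines, from machine-vision detections to on-screen annotations, needs a step that turns frame-by-frame hits into time ranges. A minimal sketch, assuming a detector (not named in the article) that samples the video at a fixed interval and emits `(timestamp, label)` pairs; the function name `detections_to_spans` is hypothetical.

```python
from __future__ import annotations

from collections import defaultdict


def detections_to_spans(
    detections: list[tuple[float, str]],
    sample_interval: float,
) -> list[tuple[str, float, float]]:
    """Hypothetical helper: collapse sampled (timestamp, label) detections
    into (label, start, end) spans. Hits of the same label at most one
    sampling interval apart are joined; each span ends one interval after
    its last hit. Spans are returned ordered by start time, then label."""
    times_by_label: dict[str, list[float]] = defaultdict(list)
    for t, label in detections:
        times_by_label[label].append(t)

    spans: list[tuple[str, float, float]] = []
    for label, times in times_by_label.items():
        times.sort()
        start = prev = times[0]
        for t in times[1:]:
            if t - prev > sample_interval + 1e-9:  # gap found: close the span
                spans.append((label, start, prev + sample_interval))
                start = t
            prev = t
        spans.append((label, start, prev + sample_interval))
    return sorted(spans, key=lambda s: (s[1], s[0]))
```

Each resulting span could then be stored as an ordinary time-ranged text annotation on the same video URL, for instance "face on camera, 0 to 1.5 s" or "airplane in background, 1 to 2 s".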

“[Mark and I] both see this as an interdisciplinary project: it’s not a film project that needs tech support or a tech project that’s searching for content to justify its existence,” says Bell. “It’s interdisciplinary work that draws ideas from many fields to create something new.”